How Deep Learning Is Transforming Point Cloud Analysis

Traditional vs AI-Powered Processing

Traditional LiDAR processing relies on rule-based algorithms: if the local slope is less than X and the height difference from neighbors is less than Y, classify as ground. These rules work, but they require constant parameter tuning for different environments. Settings optimized for coastal plains fail in mountains. Urban parameters produce errors in forests.
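To make the rule-based idea concrete, here is a minimal sketch of a threshold filter. It is not any particular product's algorithm: the function name `classify_ground`, the grid-cell seeding strategy, and the default thresholds are all assumptions chosen for illustration. Each point is compared with the lowest point in its XY grid cell and labeled ground only if both the height difference and the slope fall under fixed limits.

```python
import numpy as np

def classify_ground(points, cell_size=2.0, max_height_diff=0.5, max_slope_deg=20.0):
    """Rule-based ground filter: compare each point with the lowest point in its XY cell.

    points is an (N, 3) array of x, y, z. All thresholds are illustrative assumptions.
    """
    cells = np.floor(points[:, :2] / cell_size).astype(np.int64)
    _, cell_ids = np.unique(cells, axis=0, return_inverse=True)
    cell_ids = cell_ids.ravel()

    # Sort by cell, then by height, so the first point of each group is the cell's lowest.
    order = np.lexsort((points[:, 2], cell_ids))
    sorted_ids = cell_ids[order]
    is_first = np.ones(len(points), dtype=bool)
    is_first[1:] = sorted_ids[1:] != sorted_ids[:-1]
    lowest = np.empty(cell_ids.max() + 1, dtype=np.int64)
    lowest[sorted_ids[is_first]] = order[is_first]

    # Height difference and slope of every point relative to its cell's lowest point.
    seed = points[lowest[cell_ids]]
    dz = points[:, 2] - seed[:, 2]
    dxy = np.linalg.norm(points[:, :2] - seed[:, :2], axis=1)
    slope_deg = np.degrees(np.arctan2(dz, np.maximum(dxy, 1e-9)))
    return (dz < max_height_diff) & (slope_deg < max_slope_deg)

# Synthetic scene: a flat ground grid plus scattered points 3 to 8 m above it.
gx, gy = np.meshgrid(np.arange(0.0, 10.0, 0.5), np.arange(0.0, 10.0, 0.5))
ground = np.column_stack([gx.ravel(), gy.ravel(), np.zeros(gx.size)])
rng = np.random.default_rng(0)
canopy = np.column_stack([rng.uniform(0, 10, 50), rng.uniform(0, 10, 50), rng.uniform(3, 8, 50)])
scene = np.vstack([ground, canopy])
is_ground = classify_ground(scene)
```

The three keyword arguments are exactly the kind of parameters that need retuning per site: on steep terrain a 20 degree slope limit would reject real ground, and a cell with no ground return would wrongly seed from vegetation.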

Deep learning models take a different approach. Instead of following rigid rules, neural networks learn patterns from labeled training data. Show them enough examples of ground, vegetation, buildings, and power lines, and they learn to recognize these features in new, unseen data. The result is classification that adapts to diverse environments without per-site parameter adjustment, provided the training data covers comparable conditions.

How Deep Learning Processes Point Clouds

01

Training on Labeled Data

Neural networks learn from large datasets where every point has been manually labeled. Millions of examples teach the model to recognize ground, vegetation, buildings, and infrastructure.

02

3D Pattern Recognition

Networks like KPConv and PointNet++ process points directly in 3D, preserving spatial relationships that 2D projections lose. Each point is analyzed within its local neighborhood.
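As a rough illustration of what "local neighborhood" means in practice, the sketch below gathers the k nearest neighbors of every point and expresses them as offsets relative to that point. The helper names `knn_indices` and `local_offsets` are hypothetical, and the brute-force distance matrix is an assumption that only suits small clouds; real pipelines typically use a spatial index instead.

```python
import numpy as np

def knn_indices(points, k):
    """Brute-force k nearest neighbors; each row starts with the point itself."""
    diff = points[:, None, :] - points[None, :, :]
    dist2 = np.sum(diff * diff, axis=2)          # (N, N) squared distances
    return np.argsort(dist2, axis=1)[:, :k]

def local_offsets(points, k):
    """Neighbor coordinates relative to each center point, shape (N, k, 3)."""
    idx = knn_indices(points, k)
    return points[idx] - points[:, None, :]

rng = np.random.default_rng(1)
cloud = rng.uniform(0, 5, size=(100, 3))
offsets = local_offsets(cloud, k=8)
```

Working with relative offsets rather than absolute coordinates is what lets a network recognize the same local shape wherever it appears in the scene.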

03

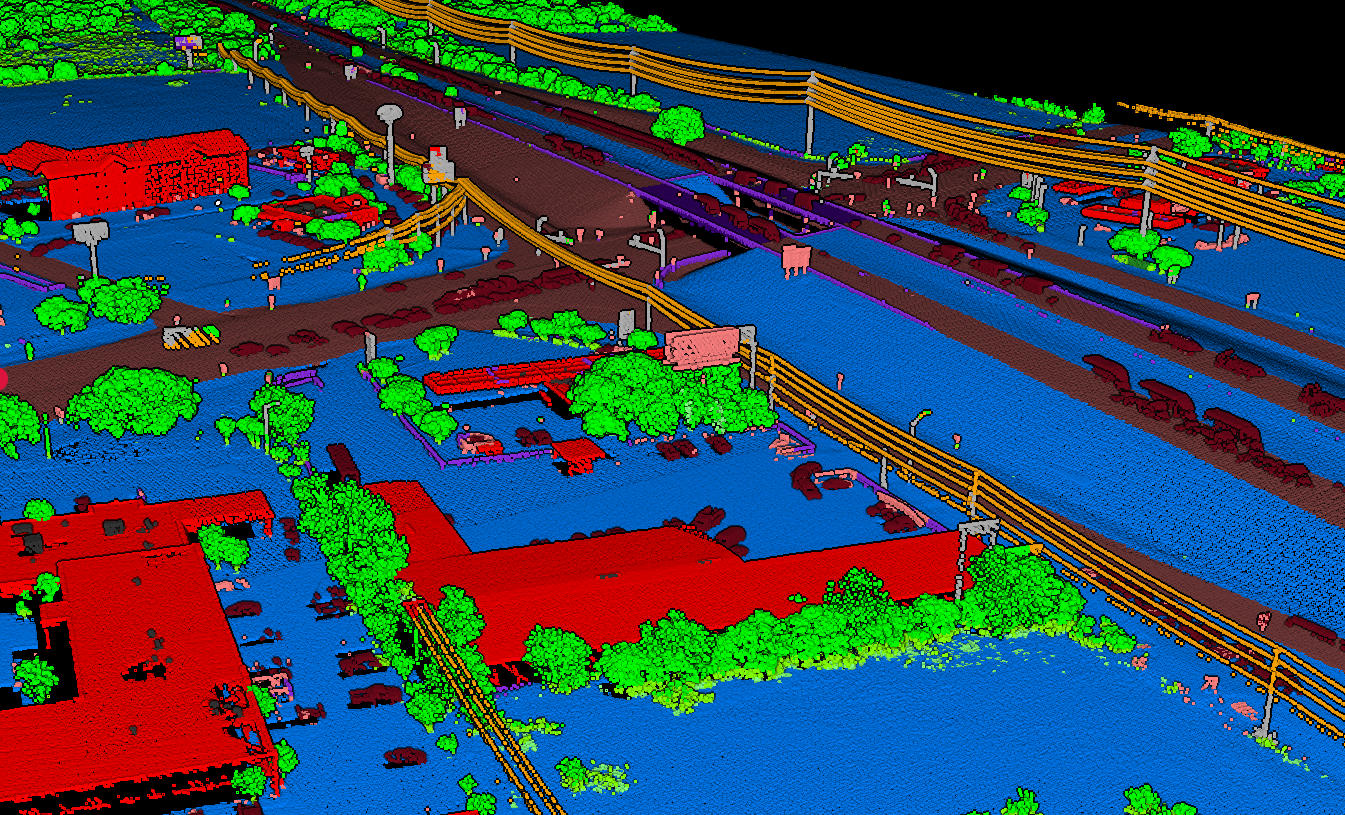

Automatic Classification

The trained model classifies each point into semantic categories: ground, low vegetation, high vegetation, buildings, and infrastructure, without any parameter tuning.
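The final labeling step can be sketched as follows, assuming the network outputs one raw score per class for every point. The class order in `CLASS_NAMES`, the function name `labels_from_scores`, and the score values are made up for illustration; a real model defines its own class indices.

```python
import numpy as np

# Hypothetical class order; a real model defines its own index-to-class mapping.
CLASS_NAMES = ["ground", "low vegetation", "high vegetation", "building", "infrastructure"]

def labels_from_scores(scores):
    """Turn per-point class scores (N, C) into a label index and a confidence per point."""
    shifted = scores - scores.max(axis=1, keepdims=True)   # numerically stable softmax
    probs = np.exp(shifted)
    probs /= probs.sum(axis=1, keepdims=True)
    return probs.argmax(axis=1), probs.max(axis=1)

# Made-up scores for three points.
scores = np.array([[4.0, 0.5, 0.1, 0.2, 0.0],
                   [0.1, 0.3, 3.5, 0.2, 0.4],
                   [0.0, 0.1, 0.2, 0.3, 2.9]])
labels, confidence = labels_from_scores(scores)
names = [CLASS_NAMES[i] for i in labels]
```

Keeping the confidence alongside the label makes it possible to flag low-confidence points for review rather than trusting every prediction equally.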

04

Cross-Environment Generalization

Models trained on diverse global datasets adapt to new terrains and land cover types automatically, from dense forests to urban centers to coastal environments.

Neural Network Architectures

KPConv (Kernel Point Convolution)

Defines convolution kernels as sets of points in 3D space, each carrying its own weight matrix, allowing the network to learn local geometric patterns. KPConv handles varying point densities naturally and has reported strong accuracy on outdoor LiDAR benchmarks.
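The core operation can be sketched for a single output point. This follows the rigid kernel point convolution with a linear influence function, where a neighbor's contribution to a kernel point falls off as max(0, 1 - distance / sigma). The kernel point layout, the random weights, and the name `kpconv_at_point` are assumptions for illustration; in a real network the weights are learned and the kernel positions are arranged differently.

```python
import numpy as np

def kpconv_at_point(center, neighbors, neighbor_feats, kernel_points, weights, sigma):
    """Kernel point convolution at one location.

    neighbors: (M, 3), neighbor_feats: (M, C_in),
    kernel_points: (K, 3) offsets, weights: (K, C_in, C_out).
    """
    rel = neighbors - center                                    # neighbor offsets from the center
    dist = np.linalg.norm(rel[:, None, :] - kernel_points[None, :, :], axis=2)  # (M, K)
    influence = np.maximum(0.0, 1.0 - dist / sigma)             # linear correlation
    # Sum over neighbors m and kernel points k of influence[m, k] * (feats[m] @ weights[k]).
    return np.einsum("mk,mc,kcd->d", influence, neighbor_feats, weights)

# Illustrative kernel layout: one center point plus six points on the axes.
kernel_points = np.array([[0, 0, 0], [1, 0, 0], [-1, 0, 0],
                          [0, 1, 0], [0, -1, 0], [0, 0, 1], [0, 0, -1]], dtype=float)
rng = np.random.default_rng(2)
weights = rng.normal(size=(7, 4, 8))              # stand-in for learned weights
center = np.array([10.0, 20.0, 5.0])
neighbors = center + np.array([[1.0, 0.0, 0.0]])  # one neighbor sitting exactly on kernel point 1
neighbor_feats = rng.normal(size=(1, 4))
out = kpconv_at_point(center, neighbors, neighbor_feats, kernel_points, weights, sigma=0.5)
```

Because the single neighbor coincides with kernel point 1 and lies outside the influence radius of all others, the output reduces to that neighbor's features multiplied by the weight matrix of kernel point 1.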

PointNet++

Processes raw point coordinates through hierarchical feature learning. It groups nearby points into local regions, extracts features at each level, and progressively builds a global understanding of the scene. Particularly effective for detailed object segmentation.
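One level of that hierarchy can be sketched as farthest point sampling to pick region centers, followed by grouping the points within a radius and max-pooling their features. This is a simplified sketch: the function names are hypothetical, sampling always starts from index 0, and the shared learned layers that PointNet++ applies before pooling are omitted.

```python
import numpy as np

def farthest_point_sampling(points, n_samples):
    """Pick n_samples indices that spread out over the cloud, starting from index 0."""
    chosen = np.zeros(n_samples, dtype=np.int64)
    min_dist2 = np.full(len(points), np.inf)
    for i in range(1, n_samples):
        d2 = np.sum((points - points[chosen[i - 1]]) ** 2, axis=1)
        min_dist2 = np.minimum(min_dist2, d2)      # distance to the nearest chosen point
        chosen[i] = int(np.argmax(min_dist2))      # take the point farthest from all chosen
    return chosen

def group_and_pool(points, feats, centroid_idx, radius):
    """Max-pool the features of all points within radius of each centroid."""
    pooled = []
    for c in centroid_idx:
        mask = np.linalg.norm(points - points[c], axis=1) <= radius
        pooled.append(feats[mask].max(axis=0))     # the centroid itself is always included
    return np.stack(pooled)

# Ten points on a line, using the x coordinate as a one-channel feature.
line = np.column_stack([np.arange(10.0), np.zeros(10), np.zeros(10)])
line_feats = line[:, :1]
centroids = farthest_point_sampling(line, 3)
region_feats = group_and_pool(line, line_feats, centroids, radius=1.5)
```

Repeating these two steps on the pooled output, with fewer centroids and a larger radius each time, gives the progressively wider view of the scene described above.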

RandLA-Net

Designed specifically for large-scale point cloud processing, using random sampling to handle millions of points efficiently. Captures wide context through attention-based aggregation of local features, which offsets the information lost to random sampling while keeping inference fast on large scenes.
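The two ideas named here, random sampling and attention-based aggregation, can be sketched separately. This is a simplified illustration rather than the published implementation: the function names are hypothetical, and `score_weights` stands in for a learned scoring layer.

```python
import numpy as np

def random_downsample(points, feats, ratio, rng):
    """Keep a random fraction of the points; cost does not grow with scene complexity."""
    n_keep = max(1, int(len(points) * ratio))
    keep = rng.choice(len(points), size=n_keep, replace=False)
    return points[keep], feats[keep]

def attentive_pooling(neighbor_feats, score_weights):
    """Aggregate (K, C) neighbor features into one (C,) vector with learned attention.

    score_weights (C, C) is a stand-in for a learned scoring layer.
    """
    scores = neighbor_feats @ score_weights
    scores = scores - scores.max(axis=0, keepdims=True)
    attn = np.exp(scores)
    attn /= attn.sum(axis=0, keepdims=True)        # softmax over neighbors, per channel
    return (attn * neighbor_feats).sum(axis=0)

rng = np.random.default_rng(3)
pts = rng.uniform(0, 100, size=(1000, 3))
fts = rng.normal(size=(1000, 6))
sub_pts, sub_fts = random_downsample(pts, fts, ratio=0.25, rng=rng)

# With all-zero scoring weights every neighbor gets equal attention (plain averaging).
uniform_pool = attentive_pooling(fts[:16], np.zeros((6, 6)))
```

Random sampling is cheap but can discard informative points, so the attention step lets the network weight the surviving neighbors by usefulness instead of treating them equally.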

Why 3D Matters

Traditional approaches often convert point clouds to 2D grids or voxels, losing fine geometric detail. Modern networks operate directly on 3D point data, preserving the full spatial structure that makes LiDAR so valuable for mapping and analysis.
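The loss of detail from voxelization is easy to demonstrate. In this small sketch (the `voxelize` helper is hypothetical), every point is snapped to the center of the cell that contains it, so two returns 10 cm apart become indistinguishable at a 1 m voxel size.

```python
import numpy as np

def voxelize(points, voxel_size):
    """Snap points to a regular grid; return occupied voxel centers and each point's voxel."""
    idx = np.floor(points / voxel_size).astype(np.int64)
    uniq, inverse = np.unique(idx, axis=0, return_inverse=True)
    centers = (uniq + 0.5) * voxel_size
    return centers, inverse.ravel()

# Two distinct returns 10 cm apart fall into the same 1 m voxel.
pair = np.array([[0.2, 0.2, 0.2], [0.3, 0.2, 0.2]])
pair_centers, pair_assignment = voxelize(pair, voxel_size=1.0)

# On a larger cloud, measure how far each point moves when replaced by its voxel center.
rng = np.random.default_rng(4)
dense = rng.uniform(0, 10, size=(5000, 3))
centers, assignment = voxelize(dense, voxel_size=1.0)
displacement = np.linalg.norm(dense - centers[assignment], axis=1)
```

The displacement is bounded by half the voxel diagonal, so a smaller voxel size recovers detail at the cost of a much larger grid.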

AI-Powered Processing with Lidarvisor

Lidarvisor uses state-of-the-art deep learning models trained on diverse global datasets. Upload your point cloud and receive consistent, accurate classification in minutes, with no machine learning expertise required.

The platform handles the entire workflow: ground classification, vegetation detection, building extraction, and infrastructure identification. Results are delivered as classified point clouds ready for export to your preferred GIS or CAD software.

Frequently Asked Questions

How accurate is AI-based LiDAR classification?

Modern deep learning models achieve overall accuracy above 95% on standard LiDAR benchmarks. In practice, accuracy depends on the quality of training data and similarity to the target environment. Models trained on diverse global datasets, like those used in Lidarvisor, generalize well across most terrain types and land cover conditions.

Do I need machine learning expertise to use AI-based classification?

No. Cloud-based platforms like Lidarvisor handle all the complexity behind the scenes. You upload your point cloud, and the platform runs the trained models automatically. There is no parameter tuning, no model selection, and no coding required.

How do AI-based methods differ from rule-based methods?

Rule-based methods use handcrafted thresholds (slope, height differences, point density) to classify points. They work well in controlled environments but need manual adjustment for each new site. AI-based methods learn patterns from labeled examples and generalize across different terrains and land cover types without parameter changes.

Which neural network architectures are used for point cloud analysis?

The leading architectures include KPConv (Kernel Point Convolution), PointNet++, and RandLA-Net. These networks process 3D point data directly rather than converting to 2D images or voxels, preserving the spatial structure that makes LiDAR data valuable. Each architecture has strengths for different scales and types of analysis.

Can AI detect individual objects in a point cloud?

Yes. Beyond classifying points into broad categories, AI models can detect and segment individual objects: trees, utility poles, vehicles, buildings, and other features. This instance-level detection enables applications like tree counting, infrastructure inventory, and asset management directly from point cloud data.

Create a FREE account now and start processing your point cloud

Get 2 GB of storage space and classify up to 10 hectares for free.